Neural Architecture Explained – For Non-Experts

Introduction

Welcome to the world of artificial intelligence (AI), where machines learn from data and make decisions that once required human judgment. At the core of modern AI, and of deep learning in particular, is one key concept: neural architecture, meaning the way a neural network’s layers and connections are arranged. Whether you’re just starting out or looking to deepen your understanding, this blog post is for you. We’ll walk through the basics of neural architecture in plain language so that complex AI concepts become easier to grasp.

AI has transformed numerous industries over the years, thanks largely to researchers like Geoffrey Hinton and organizations such as Google Brain and the Artificial Intelligence Institute. But what makes these models work? The answer lies in the inner workings of neural networks, the foundation of every deep learning model.

Understanding Neural Networks

Neural networks form the backbone of deep learning models. They pass input data through a series of layers to produce predictions or classifications, an approach loosely inspired by how neurons in the brain pass signals to one another. Let’s explore their mechanics and their significance in AI advancements.

The Basics of a Neural Network

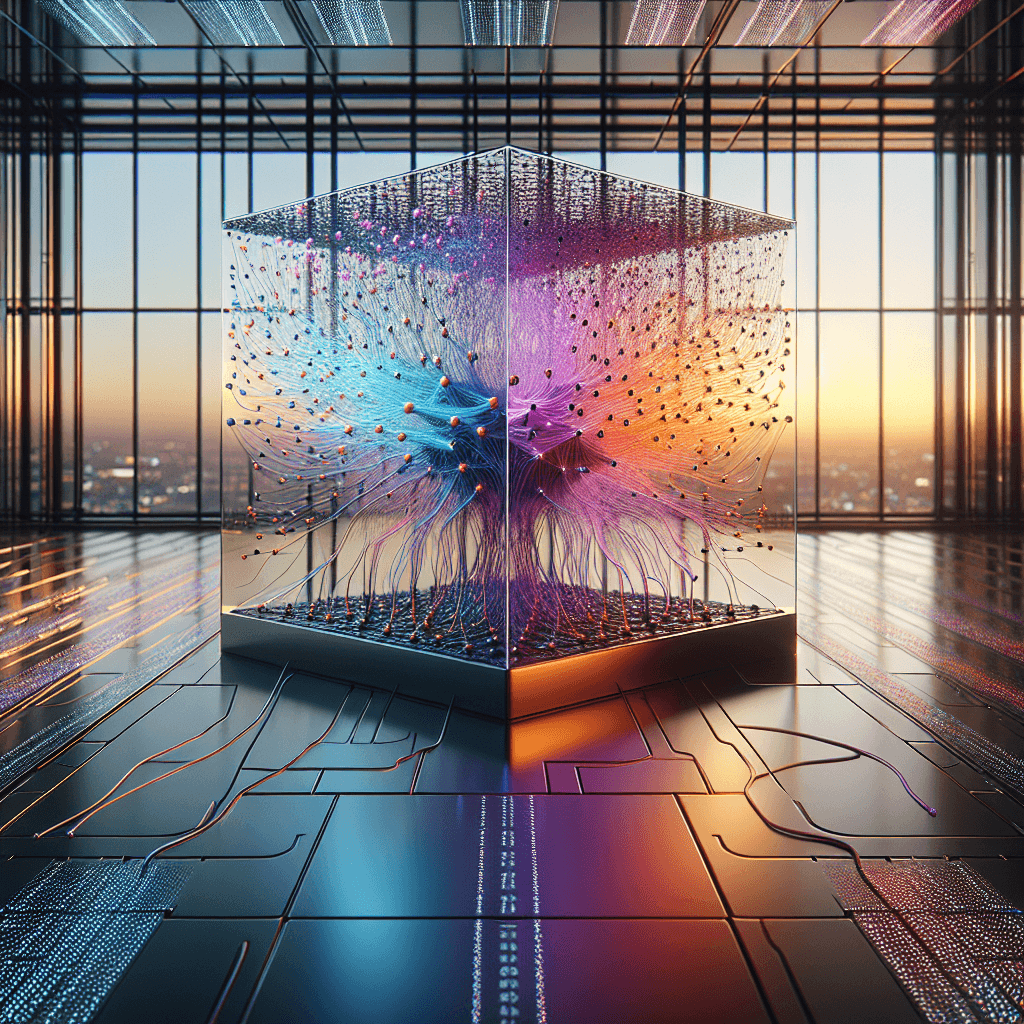

A neural network is essentially an interconnected web of nodes, loosely modeled on the neurons in the human brain. Each node performs a small calculation, and together the nodes learn to identify patterns in data. Most networks are organized into three kinds of layers:

- Input Layer: This layer serves as the entry point for raw data into the system.

- Hidden Layers: Here, complex computations and transformations occur on the input data.

- Output Layer: The final layer that produces predictions or classifications based on the processed information.
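To make the three layers concrete, here is a minimal sketch in plain Python of data flowing from an input layer, through one hidden layer, to an output layer. The weights, biases, layer sizes, and the choice of a sigmoid activation are all hypothetical values picked for illustration; a real network would learn its weights from data.

```python
import math

def sigmoid(x):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def dense_layer(inputs, weights, biases):
    """One fully connected layer: a weighted sum per node, then sigmoid."""
    outputs = []
    for node_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(node_weights, inputs)) + bias
        outputs.append(sigmoid(total))
    return outputs

def forward(inputs, hidden_weights, hidden_biases, output_weights, output_biases):
    """Pass the input through the hidden layer, then the output layer."""
    hidden = dense_layer(inputs, hidden_weights, hidden_biases)
    return dense_layer(hidden, output_weights, output_biases)

# Hypothetical, hand-picked numbers: 2 inputs -> 3 hidden nodes -> 1 output.
HIDDEN_WEIGHTS = [[0.5, -0.2], [0.1, 0.4], [-0.3, 0.8]]
HIDDEN_BIASES = [0.0, 0.1, -0.1]
OUTPUT_WEIGHTS = [[0.7, -0.5, 0.2]]
OUTPUT_BIASES = [0.05]

prediction = forward([1.0, 2.0], HIDDEN_WEIGHTS, HIDDEN_BIASES,
                     OUTPUT_WEIGHTS, OUTPUT_BIASES)
print(prediction)  # a list holding one value between 0 and 1
```

Each row of a weight list belongs to one node, so the hidden layer here has three nodes and the output layer has one.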

How Does a Neural Network Learn?

Learning in neural networks involves adjusting weights associated with connections between nodes to improve accuracy. Algorithms like backpropagation and gradient descent play crucial roles in this process, enabling iterative refinement of these weights and enhancing the network’s predictive capabilities over time.

Backpropagation and Gradient Descent

Backpropagation is the algorithm most commonly used to train feedforward neural networks. It applies the chain rule to calculate the gradient of the loss function with respect to each weight, working backward from the output layer. Those gradients tell the model which direction to adjust each weight in order to reduce the error.
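As a rough illustration of the chain rule at work, the sketch below computes the gradient for the smallest possible "network": a single neuron with one input, a sigmoid activation, and a squared-error loss. All of these choices, along with the numbers used, are assumptions made for the example. The result is then compared against a numerical estimate obtained by nudging the weight slightly.

```python
import math

def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def loss(w, b, x, target):
    """Squared error of a one-input neuron: (sigmoid(w*x + b) - target)^2."""
    prediction = sigmoid(w * x + b)
    return (prediction - target) ** 2

def gradients(w, b, x, target):
    """Gradient of the loss with respect to w and b, via the chain rule."""
    z = w * x + b
    prediction = sigmoid(z)
    dloss_dpred = 2.0 * (prediction - target)    # d(loss)/d(prediction)
    dpred_dz = prediction * (1.0 - prediction)   # derivative of the sigmoid
    dloss_dz = dloss_dpred * dpred_dz            # chain the two together
    return dloss_dz * x, dloss_dz                # dz/dw = x, dz/db = 1

# Hypothetical starting values.
W, B, X, TARGET = 0.5, 0.1, 2.0, 1.0
grad_w, grad_b = gradients(W, B, X, TARGET)

# Sanity check: nudge w up and down and measure how the loss changes.
EPS = 1e-6
numeric_grad_w = (loss(W + EPS, B, X, TARGET) - loss(W - EPS, B, X, TARGET)) / (2 * EPS)
print(grad_w, numeric_grad_w)  # the two values should agree closely
```

In a multi-layer network the same idea repeats layer by layer, with each layer reusing the gradient handed back from the layer after it.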

Gradient descent, on the other hand, is the optimization technique that puts gradients to use. At each training iteration it moves every weight a small step in the direction opposite to its gradient, and a setting called the learning rate controls how large that step is. Repeating this process gradually reduces the error between predicted and actual outcomes.
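Here is a minimal sketch of gradient descent on a deliberately simple problem: finding the value of a single weight `w` that minimizes the made-up loss `(w - 3)^2`. The loss function, starting point, and learning rates are assumptions chosen so the behavior is easy to follow.

```python
def gradient_descent(gradient, start, learning_rate, steps):
    """Repeatedly step against the gradient, scaled by the learning rate."""
    w = start
    for _ in range(steps):
        w = w - learning_rate * gradient(w)
    return w

def loss_gradient(w):
    """Gradient of the hypothetical loss (w - 3)^2, whose minimum is at w = 3."""
    return 2.0 * (w - 3.0)

# A modest learning rate settles close to the minimum at w = 3.
final_w = gradient_descent(loss_gradient, start=0.0, learning_rate=0.1, steps=100)
print(final_w)

# A learning rate that is too large overshoots further on every step.
diverged_w = gradient_descent(loss_gradient, start=0.0, learning_rate=1.1, steps=20)
print(diverged_w)
```

The second call shows why the learning rate matters: steps that are too large carry the weight past the minimum, and each step lands further away than the last.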

The Structure of Neurons in a Neural Network

A neural network’s structure is loosely inspired by the human brain. Our brains consist of billions of interconnected neurons, and artificial neural networks borrow that idea by arranging simple computing units, called nodes, into connected layers.

Key Components of a Biological Neuron

- Dendrites: These receive signals from other neurons.

- Cell Body (Soma): Processes incoming signals and determines whether to pass them on.

- Axon: Transmits the processed signal to subsequent neurons.

In a neural network, these biological components are abstracted into mathematical operations that collectively enable learning and pattern recognition.
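One way to picture that abstraction is the sketch below, which maps each biological part to a line of arithmetic. The step-style "fire or stay silent" rule and the weights are illustrative assumptions; most modern networks use smoother activation functions.

```python
def neuron(inputs, weights, bias):
    """A single artificial neuron with a simple fire / don't-fire output."""
    # "Dendrites": each incoming signal is scaled by its connection weight.
    weighted_signals = [w * x for w, x in zip(weights, inputs)]
    # "Cell body": add the signals together and decide whether to fire.
    total = sum(weighted_signals) + bias
    fires = total > 0
    # "Axon": the result that is passed on to the next neurons.
    return 1 if fires else 0

# Hypothetical weights that make the neuron behave like a logical AND:
# it fires only when both inputs are switched on.
AND_WEIGHTS = [1.0, 1.0]
AND_BIAS = -1.5

for a in (0, 1):
    for b in (0, 1):
        print(a, b, neuron([a, b], AND_WEIGHTS, AND_BIAS))
```

Changing the weights or the bias changes what the neuron responds to, which is exactly what training adjusts.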

Geoffrey Hinton and The Evolution of Neural Networks

Deep learning owes much to pioneers like Geoffrey Hinton, whose research has shaped how neural networks are built and trained. Often called the “godfather of deep learning,” Hinton developed ideas that now appear in both introductory courses and cutting-edge research.

Key Contributions by Geoffrey Hinton:

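- Co-authoring the influential 1986 paper, with David Rumelhart and Ronald Williams, that popularized backpropagation for training neural networks.

- Pioneering research into deep belief networks, and co-authoring AlexNet (2012), a convolutional neural network (CNN) that marked a breakthrough in image recognition. CNNs themselves were pioneered earlier by researchers such as Yann LeCun.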

Hinton’s work has not only advanced theoretical knowledge but also practical applications. For instance, CNNs are now foundational to image recognition systems used in everything from medical diagnostics to autonomous vehicles.

The Role of Google Brain in Advancing AI

Google Brain was Google’s deep learning research team, focused on developing scalable and efficient deep learning models that address complex problems. Its researchers created the TensorFlow framework and contributed to the Transformer architecture, and in 2023 the team merged with DeepMind to form Google DeepMind. Two well-known projects from Google’s wider research community, AlphaGo and BERT, are often mentioned alongside Google Brain, although neither came from the team itself.

Impactful Projects from Google and DeepMind:

- AlphaGo (DeepMind): A program that defeated top professional players at the game of Go, illustrating AI’s capability in strategic decision-making.

- BERT (Bidirectional Encoder Representations from Transformers, from Google AI Language): Improves natural language understanding for tasks like question answering and sentiment analysis.

These projects highlight how neural architecture can be applied to solve diverse challenges, pushing the boundaries of what machines are capable of achieving.

The Artificial Intelligence Institute’s Role

The Artificial Intelligence Institute helps shape future AI technologies by conducting research and fostering collaborations across disciplines. Its work on ethical AI practices aims to ensure that advancements benefit society while addressing the risks associated with AI deployment.

Educational Initiatives and Collaborations

Through educational initiatives, the institute aims to make neural architecture basics more accessible. Workshops, seminars, and online courses are offered to demystify deep learning models for students and professionals alike.

Applications of Neural Networks in Various Industries

Neural networks have found applications across various industries, revolutionizing how tasks are approached and executed:

- Healthcare: Used for diagnosing diseases by analyzing medical images with high accuracy.

- Finance: Assists in detecting fraudulent activities through pattern recognition in transaction data.

- Retail: Enhances customer experience by personalizing recommendations based on purchasing history.

These applications exemplify the versatility of neural networks, illustrating their potential to transform industries and improve efficiencies.

Challenges and Future Directions

While neural networks offer numerous benefits, they also present challenges such as interpretability, bias, and resource-intensive training. Addressing these issues is crucial for advancing AI responsibly.

Advancements in Neural Architecture Research

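Future research focuses on developing more efficient architectures, reducing computational costs, and enhancing model interpretability. Techniques like transfer learning (reusing a model trained on one task as the starting point for another) and federated learning (training across many devices without collecting their data in one place) are paving the way for more sustainable and scalable neural networks.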
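To give a flavor of federated learning, the sketch below shows one common ingredient: averaging the weights that several clients have trained locally, with each client weighted by how much data it holds. This is only the averaging step, not a full federated system, and the client weights and dataset sizes are made-up values.

```python
def federated_average(client_weights, client_sizes):
    """Combine per-client weight lists into one list, weighted by data size."""
    total_examples = sum(client_sizes)
    n_params = len(client_weights[0])
    averaged = []
    for i in range(n_params):
        weighted_sum = sum(
            weights[i] * size
            for weights, size in zip(client_weights, client_sizes)
        )
        averaged.append(weighted_sum / total_examples)
    return averaged

# Hypothetical example: two clients, each holding a tiny two-weight model.
CLIENT_WEIGHTS = [[1.0, 2.0], [3.0, 4.0]]
CLIENT_SIZES = [1, 3]  # the second client trained on three times as much data

global_weights = federated_average(CLIENT_WEIGHTS, CLIENT_SIZES)
print(global_weights)  # [2.5, 3.5]
```

Only the weights leave each client in this scheme, which is why the approach is attractive when the raw data is private.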

Conclusion

This overview of neural architecture has covered how networks are structured, how they learn, and where they are applied. Stay curious as you delve deeper into how these models shape our world and drive innovation across sectors, and let neural networks inspire you to explore new possibilities in technology and beyond!